Confirmation Bias in Crisis Management

Confirmation bias - the tendency to favour information that aligns with existing beliefs - poses a serious challenge during crises. It affects leaders at every stage of decision-making, from assessing situations to executing plans, often leading to flawed strategies and missed opportunities. This cognitive shortcut, exacerbated by time pressure, uncertainty, and organisational dynamics, can derail even experienced professionals.

Key insights include:

- Impact on Decision-Making: Leaders often stick to initial assumptions, ignoring contradictory evidence, which distorts situational awareness and undermines strategy.

- Examples of Failures: Cases like the UK's delayed COVID-19 response and the 2003 Iraq War illustrate how confirmation bias can lead to severe consequences.

- Organisational Factors: Rigid hierarchies, insular decision-making, and time constraints amplify bias, stifling dissent and critical thinking.

- Countering Bias: Structured tools (e.g., pre-mortem analysis, decision matrices) and fostering open dialogue can help leaders challenge assumptions.

To navigate crises effectively, leaders must focus on building systems that prioritise diverse perspectives, critical thinking, and evidence-based decision-making. Organisations should invest in training, create space for dissent, and adopt decision-making frameworks to minimise the influence of bias.



How Confirmation Bias Damages Crisis Response

How Confirmation Bias Damages Crisis Response: Three Critical Stages

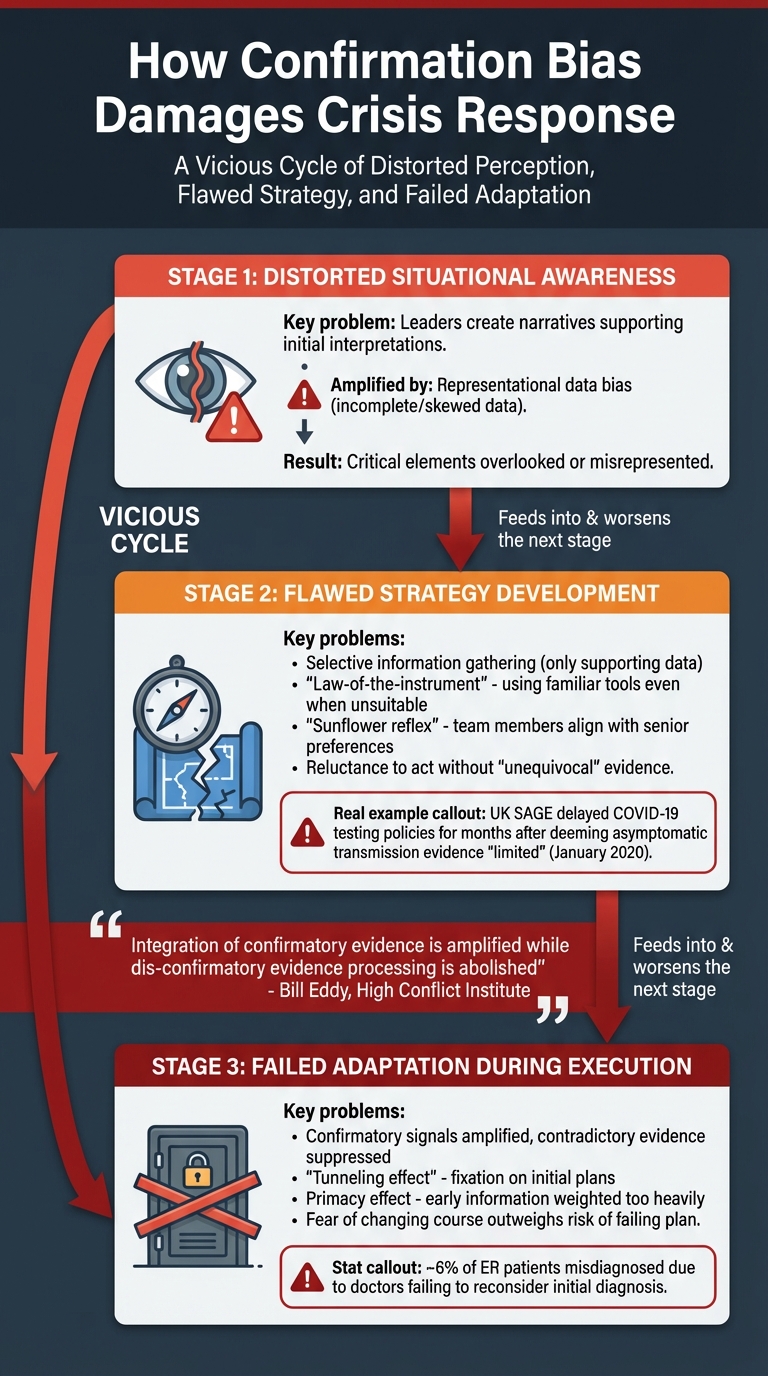

Confirmation bias undermines crisis management at every stage - assessment, planning, and execution. Leaders often favour data that aligns with their initial assumptions, while disregarding evidence that challenges these views. This creates a "vicious cycle", where decisions based on incomplete or flawed information reinforce the same biases, making it harder to adjust as the crisis unfolds.

The problem is compounded by a tendency to prioritise speed over accuracy. In high-pressure situations, the urgency to act often overrides the need for careful evaluation, leaving blind spots unaddressed. This rush to decide can have severe consequences for the effectiveness of crisis response.

Distorted Situational Awareness

Confirmation bias first affects how leaders perceive the crisis itself. Instead of forming a full and accurate understanding, they create a narrative that supports their initial interpretation. This issue becomes even more serious when combined with "representational data bias" - a situation where the available data is already incomplete or skewed. Rather than compensating for these flaws, confirmation bias amplifies them.

As a result, leaders may believe they have a comprehensive grasp of the situation, while critical elements of reality are overlooked or misrepresented.

Flawed Strategy Development

When situational awareness is compromised, the strategies that follow are inherently unstable. During planning, confirmation bias leads to selective information gathering - leaders focus on data that supports their assumptions and dismiss evidence to the contrary.

This behaviour often triggers the "law-of-the-instrument", where responders rely on familiar tools and protocols, even when they are unsuitable for the specific crisis. Another common issue is the "sunflower reflex", where team members align their decisions with senior stakeholders' preferences, sidelining alternative approaches.

Perhaps the most dangerous effect of confirmation bias is a reluctance to adopt precautionary measures without "unequivocal" evidence. This delay can have catastrophic outcomes in crises involving exponential growth, such as pandemics. For instance, in January 2020, the UK's Scientific Advisory Group for Emergencies (SAGE) reviewed evidence of asymptomatic COVID-19 transmission but deemed it "limited." As a result, the UK Department of Health and Social Care delayed updating testing and isolation policies for months. Such inflexibility in strategy makes it harder to adapt as new information emerges.

Failed Adaptation During Execution

Even when new evidence becomes available, confirmation bias can prevent leaders from revising their plans. A cognitive mechanism strengthens confirmatory signals while suppressing conflicting ones. Bill Eddy of the High Conflict Institute explains:

Integration of confirmatory evidence is amplified while dis-confirmatory evidence processing is abolished.

This "tunnelling" effect, where leaders fixate on initial plans, is often more pronounced in highly intelligent individuals. As science writer David Robson notes:

More intelligent people are often better able to find the evidence that supports their point of view, and they're also better able to rationalise any evidence that doesn't match their existing point of view.

The primacy effect further entrenches these biases, as information received early in a crisis carries disproportionate weight. Later, more accurate data is often ignored or undervalued. For example, U.S. emergency room studies show that around 6% of patients are misdiagnosed because doctors fail to reconsider their initial diagnosis or seek contradictory evidence.

Leaders may also avoid asking difficult questions during execution, fearing the immediate cost of changing course more than the uncertain risks of persisting with a failing plan. Restructuring expert Tyrone Courtman observes:

We tend not to ask a question if we think we might not like the answer.

Without deliberate efforts to counteract these biases, even compelling new evidence is unlikely to prompt necessary changes. This avoidance of uncomfortable truths can keep flawed strategies in place long after they should have been abandoned.

Organisational Factors That Amplify Confirmation Bias

The culture and structure within organisations can either curb or exacerbate confirmation bias, particularly during crises when leaders' judgement is under pressure.

Rigid Hierarchies

Structural hierarchies within organisations can significantly influence decision-making. In rigid hierarchies, dissent is often stifled, fostering an environment where groupthink thrives. Subordinates may hesitate to challenge their superiors, allowing flawed assumptions to move unchecked through the chain of command.

Additionally, these structures place immense accountability pressures on leaders, particularly in high-stakes scenarios. Leaders often prioritise how their actions will be perceived by external stakeholders, such as auditors or the public, over scrutinising their decisions. This focus can lead to favouring information that supports existing choices while ignoring evidence that could reveal weaknesses.

A striking example comes from the Australian Wheat Board. In the early 2000s, the organisation paid substantial kickbacks to the Iraqi government, violating UN sanctions. A 2006 inquiry revealed that groupthink and a collective desire for unanimity discouraged dissenting voices, allowing unethical and illegal strategies to persist.

Time Pressure and Limited Resources

Crises often impose intense time constraints and resource limitations, pushing decision-makers to rely on mental shortcuts, or heuristics, that reinforce familiar patterns rather than exploring new solutions.

Research by David Paulus at Delft University of Technology highlights how analysts frequently fail to correct biases, even when they are identified. The pressure to deliver quick outcomes often overshadows the need for accuracy:

Analysts fail to successfully debias data, even when biases are detected, and that this failure can be attributed to undervaluing debiasing efforts in favour of rapid results.

The 2014 West Africa Ebola epidemic provides a real-world example. High stakes and limited resources led to biased interpretations of data and impaired cognitive processes among decision-makers. The overwhelming flow of information in the early stages further reduced the ability to filter irrelevant details, entrenching existing biases.

Insular Decision-Making Groups

When leadership teams operate in isolation, they risk becoming echo chambers where biased perspectives go unchallenged. Such groups often prioritise harmony and consensus over critical evaluation, leading to self-censorship and the illusion of unanimity. Strong-willed leaders can unintentionally suppress dissent, as team members fear conflict or social exclusion.

Neurological studies show that confirming information activates the brain's reward centres, while disconfirming evidence causes discomfort. This natural inclination makes it easier for groups to maintain consensus rather than confront opposing viewpoints.

A case in point is Dick Smith Electronics, which collapsed in January 2016. The company's leadership had overestimated inventory turnover due to overconfidence and insular thinking. The resulting financial misjudgements led to the closure of over 300 stores and the loss of nearly 3,000 jobs.

Highly secretive or compartmentalised organisational structures exacerbate these issues by limiting external oversight. For example, in the lead-up to the 2003 Iraq War, the Bush administration selectively emphasised intelligence that supported the existence of weapons of mass destruction. Despite evidence from UN inspections and internal dissent within the CIA, fabricated claims from the "Curveball" informant were given undue weight because they aligned with the administration's pre-existing narrative. This insular decision-making process systematically excluded contradictory evidence, with devastating consequences.

How Leaders Can Counter Confirmation Bias

Leaders facing high-pressure situations require practical strategies to counter confirmation bias effectively. The techniques outlined below offer actionable steps to improve decision-making, even in the midst of a crisis.

Structured Decision-Making Methods

Using formal frameworks can help leaders challenge their assumptions and minimise bias. One such approach is the pre-mortem analysis, which involves imagining a future where a strategy has failed and then working backwards to identify potential causes. This process highlights risks that might otherwise be overlooked due to overconfidence or optimism. Another tool, the 12-Question Quality Control Checklist, developed by Kahneman, Lovallo, and Sibony, prompts leaders to examine recommendations for bias with questions like "Are there motivated errors driven by self-interest?" or "Have dissenting opinions and credible alternatives been fully considered?"

Decision support matrices are another effective tool, particularly under stress. These matrices use pre-determined criteria and weights to guide decisions, helping leaders stick to rational goals rather than emotional reactions. Research shows that individuals under low stress are 50% more likely to make logical decisions compared to those under high stress, underscoring the value of "cold state" planning. In a study of 71 national risk analysts, a single 40-minute debiasing intervention significantly reduced confirmation bias across both expert and novice participants.
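
To make the idea of pre-determined criteria and weights concrete, here is a minimal sketch of a decision support matrix in Python. The criteria, weights, option names, and ratings are all hypothetical and chosen purely for illustration; a real matrix would use whatever criteria the team agrees during calm, "cold state" planning.

```python
# Hypothetical criteria and weights, fixed in advance during calm planning.
# The weights sum to 1 so that scores stay on the same 0-10 scale as the ratings.
WEIGHTS = {"safety": 0.5, "speed": 0.3, "cost": 0.2}


def score_option(ratings: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted sum of 0-10 ratings for one option.

    Raises ValueError if a criterion has no rating, so that no
    criterion can be quietly skipped under pressure.
    """
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(weights[c] * ratings[c] for c in weights)


def rank_options(options: dict[str, dict[str, float]]) -> list[str]:
    """Return option names ordered from highest to lowest weighted score."""
    return sorted(options, key=lambda name: score_option(options[name]), reverse=True)


# Illustrative ratings only; in practice the team would supply these.
options = {
    "evacuate_site": {"safety": 9, "speed": 4, "cost": 3},
    "shelter_in_place": {"safety": 6, "speed": 8, "cost": 8},
}

for name in rank_options(options):
    print(f"{name}: {score_option(options[name]):.1f}")
```

Because the weights are set before the crisis, the only judgement made under stress is the rating of each option against each criterion, which is easier for colleagues to inspect and challenge than a single overall preference.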

While structured frameworks are crucial, fostering an organisational culture that encourages open challenge is equally important.

Creating Space for Dissent

Organisations often default to compliance, which can reinforce confirmation bias. Leaders must actively create environments where dissent is not only allowed but encouraged. Techniques like red teaming, where a designated individual or group challenges prevailing assumptions, ensure that alternative perspectives are heard.

The Three Theories Rule is another useful approach, requiring leaders to consider at least three possible explanations for a situation: the primary theory is correct, it is entirely false, or multiple factors are equally at play. Tim Ramsey MBE, Partner at Schillings, emphasises the importance of addressing bias:

You can have the best people in the room, but if bias is at play, you won't get the best outcome.

Building psychological safety is also critical. Leaders should openly commend those who challenge their views and acknowledge their own mistakes. For example, NASA's investigation into the 2003 Space Shuttle Columbia disaster revealed that a culture discouraging dissent prevented an engineer from raising critical concerns. To avoid similar outcomes, leaders can ask team members to present second and third choices for handling a situation, ensuring that credible alternatives are given due consideration.

Structured decision-making and fostering dissent are vital, but leaders must also remain vigilant to bias in real time.

Recognising Bias in Real Time

During a crisis, spotting confirmation bias as it happens is essential. The bias blind spot - the belief that one is less biased than others - can hinder self-awareness. As noted by the ERM Initiative Staff at NC State University:

Individuals cannot control their own biases and faulty logic, but humans have the ability to point it out in other people very well... the only hope for solid decision making is to do it in groups.

Aide memoires, or simple checklists, can help leaders identify and address bias during high-pressure moments. Questions like "What will the other side say about this?" or "What role might we be playing in this problem?" encourage critical thinking and disrupt automatic responses.

Leaders should also be mindful of the sunflower reflex, where team members defer to a leader’s opinion or try to align with perceived preferences. Allowing team members to speak later in discussions can encourage more genuine input. Research suggests that high confidence can amplify confirmation bias, making it harder to consider disconfirming evidence. Maintaining a sceptical stance towards initial conclusions is therefore crucial.

The UK Government's handling of COVID-19 provides a cautionary tale. The COVID-19 Inquiry highlighted how groupthink led to an unchallenged assumption that preparations for an influenza pandemic would suffice, neglecting the broader socio-economic impacts of a coronavirus-specific response.

By combining real-time vigilance with structured methods and open dissent, leaders can build a comprehensive defence against confirmation bias.

| Technique | Real-Time Application | Key Benefit |
|---|---|---|
| Pre-Mortem | Imagine the plan has failed; identify why | Identifies hidden risks and counters overconfidence |
| Red Teaming | Assign a "devil's advocate" to challenge assumptions | Prevents groupthink and fosters critical evaluation |
| Decision Matrix | Use preset weights to score options | Anchors decisions to logic during high stress |
| Aide Memoire | Use a checklist of bias-spotting questions | Provides a quick mental reset under pressure |

House of Birch integrates these methods into its bespoke leadership advisory services, equipping leaders to manage bias effectively in high-stakes scenarios.

Building Long-Term Defences Against Cognitive Biases

While managing biases in the moment is crucial during crises, long-term resilience requires systematic training, organisational culture shifts, and careful pre-crisis planning.

Crisis Simulations and Training

Structured training exercises help teams recognise and address biases under varied scenarios. For instance, a 2019 study by Anne-Laure Sellier, Irene Scopelliti, and Carey K. Morewedge demonstrated the effectiveness of debiasing interventions. Participants who received debiasing training before working through the Carter Racing case were 29% less likely to exhibit confirmation bias compared to those without training.

Similarly, in 2022, a study involving 71 national risk analysts highlighted the impact of a 40-minute debiasing session. This training, integrated into a national conference, reduced confirmation bias in both seasoned analysts and a control group of master's students.

However, experts like Sandra Galletti and Steven B. Goldman from MIT argue that traditional crisis simulations often fall short. They recommend enhancing these exercises to prepare leaders for modern disruptions, which frequently involve cascading failures, unclear threats, and ethical dilemmas.

IBM's X-Force Incident Response and Intelligence Services (IRIS) offers a practical example with its "Cyber Range" training. In one simulation, participants investigated a ransomware-like scenario using the Dual Verification method. Teams were split: one to confirm a hypothesis and another to disprove it. Within 12 hours, a junior team member identified a rule change in detection software as the issue, avoiding misdiagnosis of a new outbreak. Christopher Crummey from IBM Security noted:

The strongest challenge is confirmation bias, when you tend to focus on evidence that supports a hypothesis and ignore the evidence that doesn't.

Effective simulations should also train participants in the SOS protocol - Stop, Oxygenate, Seek. This approach encourages pausing to gather information before making high-stakes decisions, counteracting the cognitive impairments caused by stress hormones like cortisol, which can affect decision-making for up to 20 minutes during intense crises.

These exercises not only build practical skills but also promote a culture of questioning and critical analysis.

Cultivating Critical Thinking

Encouraging critical thinking within organisations is essential. Calibration training, which aligns confidence levels with actual accuracy, is a skill that can be developed over time. As the Behavioural Insights Team explains:

Calibration is trainable. Like physical fitness, it can be built through habit, feedback, and repetition, without requiring innate brilliance.
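
One way to give calibration practice the feedback it depends on is to keep a log of judgements and score them afterwards. The sketch below is an illustration, not a method described by the Behavioural Insights Team: it assumes each log entry records a stated confidence between 0 and 1 and whether the judgement turned out to be correct, and it uses two common measures, the Brier score and the gap between average confidence and actual hit rate. The sample log is invented.

```python
def brier_score(log: list[tuple[float, bool]]) -> float:
    """Mean squared gap between stated confidence and outcome (0 is perfect)."""
    return sum((p - (1.0 if correct else 0.0)) ** 2 for p, correct in log) / len(log)


def overconfidence_gap(log: list[tuple[float, bool]]) -> float:
    """Average stated confidence minus the share of judgements that were correct.

    A positive value suggests overconfidence; a negative value suggests underconfidence.
    """
    mean_confidence = sum(p for p, _ in log) / len(log)
    hit_rate = sum(1 for _, correct in log if correct) / len(log)
    return mean_confidence - hit_rate


# Invented example: (stated confidence, whether the judgement proved correct).
judgement_log = [(0.9, True), (0.9, False), (0.8, True), (0.7, False)]

print(f"Brier score: {brier_score(judgement_log):.4f}")
print(f"Overconfidence gap: {overconfidence_gap(judgement_log):+.3f}")
```

Reviewing these two numbers after each exercise or simulation gives individuals a simple, repeatable signal of whether their confidence is running ahead of their accuracy.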

Leaders play a pivotal role in fostering environments where questioning assumptions is normalised. Psychological safety - where team members feel comfortable voicing concerns or admitting errors - is key. Tim Ramsey MBE, a Partner at Schillings, highlights this:

The key to the best decisions is having all possible options on the table and a robust assessment of each one's strengths and weaknesses.

Red teaming, where a designated group challenges decisions and assumptions, is another tool for promoting critical thinking. This method helps counteract groupthink and ensures decisions are rigorously tested. Research also shows that individuals in low-stress environments are 50% more likely to make sound strategic choices compared to those under pressure.

Organisations must also address the "bias blind spot" - the belief that one is less biased than others. A 2022 study of national risk analysts found that even experts, who spent over a quarter of their work hours on risk analysis, were no less prone to this bias than novices. This underscores the need for deliberate cultural interventions, as expertise alone does not eliminate bias.

By embedding critical thinking into organisational norms, leaders can build a foundation for more objective decision-making.

Risk Assessment and Scenario Planning

Proactive risk assessments and scenario planning help organisations identify weaknesses and challenge assumptions before a crisis strikes. Decision Support Matrices (DSM), developed during calm periods, pre-define decision-making criteria, reducing the risk of impulsive choices during emergencies.

Scenario planning should include ambiguous and incomplete information, forcing teams to confront assumptions made under uncertainty. This approach uncovers "unknown unknowns" and prepares organisations for unpredictable challenges.

Aide memoires - structured checklists or question sets - can be developed during planning to systematically evaluate decisions and identify cognitive biases during a crisis. These tools are most effective when tailored to an organisation’s specific needs and integrated into existing frameworks.

By embedding these practices into their processes, organisations can build robust systems to mitigate confirmation bias and other cognitive pitfalls at every stage of crisis management.

House of Birch applies these principles in its leadership advisory services, equipping leaders with tailored training, cultural strategies, and pre-emptive planning to strengthen organisational resilience against cognitive biases.

Conclusion

Confirmation bias can cloud judgement, disrupt strategy, and hinder adaptability during crises. Adding to the complexity is the "bias blind spot" - the belief that one is less biased than others - which can affect even the most experienced professionals. As a remark often attributed to Stephen Hawking puts it:

The greatest enemy of knowledge is not ignorance, it is the illusion of knowledge.

Research underscores that expertise alone does not shield individuals from bias. However, deliberate interventions can make a difference. Leaders who define decision-making criteria in advance, encourage dissenting opinions, and take time to analyse situations carefully - even under pressure - can disrupt the cycle of unexamined biases.

Addressing these issues demands a cultural shift within organisations. Building resilience over the long term means embedding practices like crisis simulations, calibration exercises, and systematic risk assessments. These measures help teams identify and address biases before they lead to costly mistakes.

In high-stakes scenarios, an external perspective can be invaluable. As highlighted earlier, engaging House of Birch's tailored advisory services offers leaders a neutral space to challenge assumptions, refine decision-making skills, and implement structured frameworks suited to their organisation's needs. These collaborations equip leaders with the mental discipline required to maintain focus and clarity when it matters most. By adopting these approaches, leaders can shift crisis management from reactive and biased to proactive and resilient.

FAQs

How can I spot confirmation bias in myself during a crisis?

To spot confirmation bias during a crisis, pay attention to behaviours such as focusing solely on information that aligns with your existing beliefs, dismissing evidence that challenges them, or twisting unclear data to fit your assumptions. Another sign is selective memory, where you might only remember details that reinforce your viewpoint. Identifying these tendencies allows for a more balanced evaluation of all available information, rather than just what supports your initial stance.

What’s the quickest way to challenge our crisis plan without slowing decisions?

The quickest way to evaluate a crisis plan while preserving decision-making speed is to promote structured dissent and embrace diverse viewpoints. This involves actively questioning existing assumptions, exploring alternative perspectives, and employing debiasing strategies such as documenting key beliefs for scrutiny or involving neutral external advisors. These approaches encourage critical thinking and create an environment of psychological safety, enabling leaders to address biases effectively without slowing progress.

How can you create safe dissent in a rigid hierarchy?

To encourage constructive dissent within a rigid hierarchy, leaders need to create an environment where team members feel secure in expressing differing viewpoints without fear of reprisal. This requires prioritising psychological safety, where individuals believe their contributions are respected and valued. Leaders can achieve this by fostering open discussions and demonstrating a willingness to accept challenges to their own ideas. Tools such as anonymous feedback mechanisms or organised debate sessions can help embed dissent into the organisational culture. These approaches ensure that diverse perspectives are not only heard but actively contribute to better decision-making, particularly in high-pressure situations.